Insights from the PMCR 2026 panel discussion

As firms across financial services continue navigating the realities of AI adoption, the PMCR 2026 panel on Building AI‑Ready Client Reporting Teams surfaced a refreshingly honest set of perspectives. Moderated by Sheetal Joshipura, VP, Head of Client Services at Matthews Asia, the session brought together three distinct lenses:

- Sheetal Joshipura, VP, Head of Client Services at Matthews Asia

- Joshua Hilbert, CIPM, Vice President at Cohen & Steers

- Brad Burgunder, Relationship Manager, Kurtosys

The industry is at a turning point not only because of the technology itself, but because of how people respond to it.

Brad Burgunder - Relationship Manager, Kurtosys

1. The fear factor: ‘Is this coming for my job?’

This was one of the most emotionally resonant parts of the discussion, and we wrote about it in our feature ‘Building a culture of creative problem-solvers in an AI world’.

While I don’t believe AI will take jobs, it’s important for us to dive in and not be hesitant to experiment with AI.

Sheetal Joshipura - VP, Head of Client Services at Matthews Asia

The fastest path to meaningful AI adoption in financial services starts with personal use. Be curious, experiment. Find ways to be more efficient. And get rid of low-level tasks.

2. Financial services is behind but interested in possibilities

The technology world moved two and a half years ago, yet financial services is still at the starting line. The reason? Infosec and compliance. Most firms have implemented tools like Copilot but are cautious about moving too fast. Even so, they are actively working to avoid falling behind.

Many larger clients are going through approval boards and defining Statements of Use and an ISO AI compliance framework. Medium-sized firms have moved more quickly. One of our clients has added AI bots for their research teams and even hired an AI expert to help them build bots for other areas.

3. We moved on from efficiency gains. Now it’s quality control and operational risk

Of course, many AI conversations focus on speed and productivity, and this will still evolve for some time. But we’re moving on to another big value proposition. Arguably the biggest opportunity in AI is reducing operational risk, because it’s a challenge to quality check every factsheet and every report. Human quality control isn’t scalable.

Could AI become the invisible layer that catches errors before they become brand or regulatory problems? But today? Ironically, AI is viewed as an operational risk in itself because of data governance uncertainties.

For one of our Kurtosys clients, a COO, the number one question isn’t ‘How do we save time?’ but ‘How do we stop really bad things from happening?’ This shift, from efficiency to safety, may become the industry’s way to get moving.

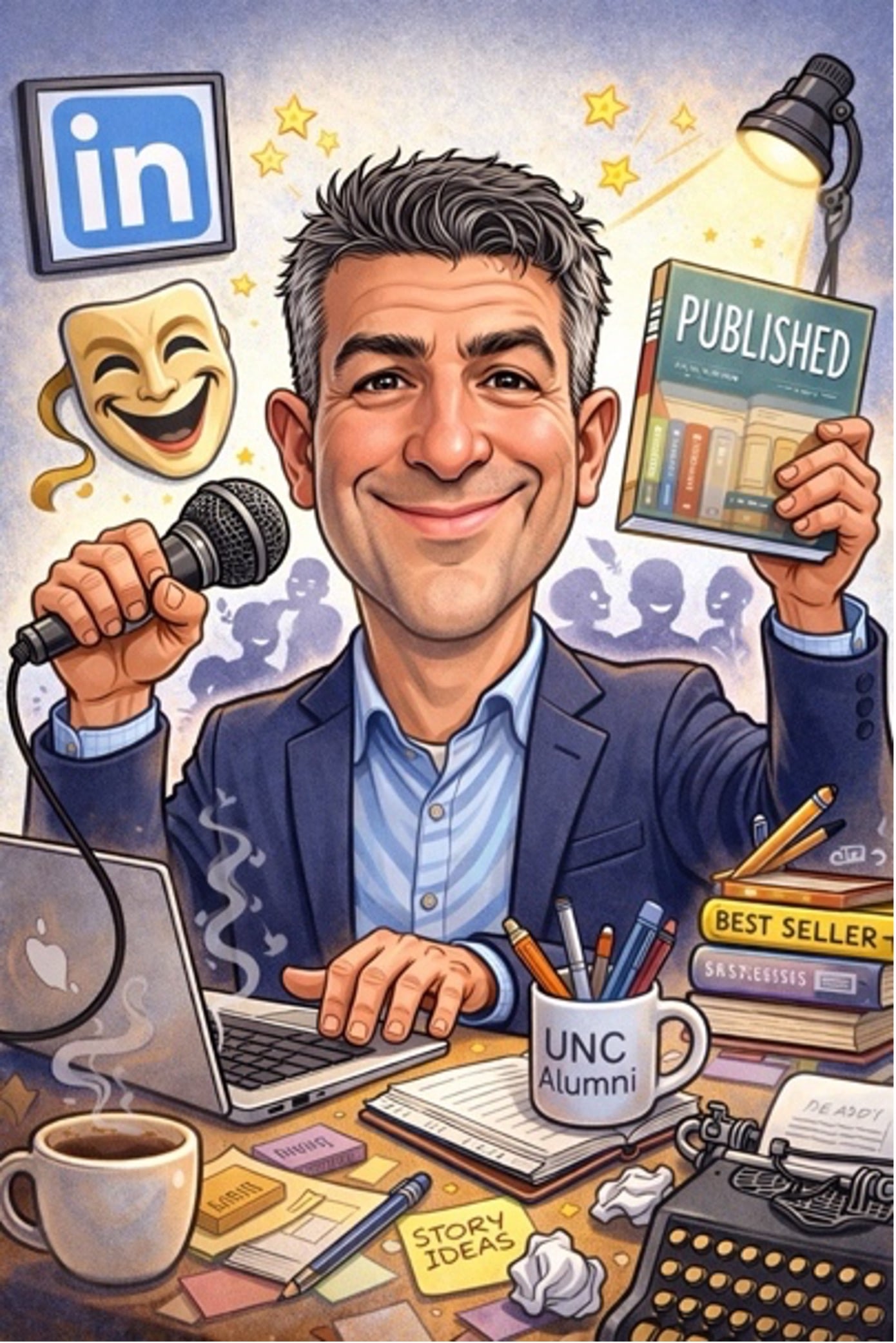

The ‘Human-in-the-loop’ reality check

While we discuss the power of AI to mitigate risk, we cannot ignore the ‘uncanny valley’. AI is a powerful co-pilot, but it still requires human oversight to navigate the nuances of financial data.

To illustrate this, look no further than a recent experiment I ran. I asked an AI to generate a caricature of me based on my LinkedIn profile. While it captured my role and my look, it also decided I needed three arms.

It’s a light-hearted example of a serious point: In client reporting, ‘80% correct’ is a failure. Whether it’s a caricature with too many limbs or a factsheet with a hallucinated decimal point, the technology is only as good as the human guiding it.

As we move toward AI-ready teams, our goal is to give the reporting team the extra arms they need to scale, provided they keep their eyes on the controls.

Our next article by Patrick McKenna will explore the specific Kurtosys features that provide these guardrails for asset managers.

Building AI-ready reporting teams: Frequently asked questions

- What were the key takeaways from the PMCR 2026 panel on AI adoption?

The consensus among industry leaders (including representatives from Matthews Asia and Cohen & Steers) is that the focus has shifted from mere efficiency to operational risk mitigation. While AI provides massive productivity gains, the industry standard remains zero-tolerance for error; in financial reporting, 80% accuracy is a 100% failure.

- How does AI help reduce operational risk in asset management?

AI acts as an invisible layer of quality control. By performing automated health checks across thousands of data points in factsheets and reports, AI can flag anomalies and outliers that are impossible for humans to spot at scale. This allows teams to shift from manual checking to strategic oversight, catching potential errors before they reach clients or regulators.

- Why are mid-sized asset managers adopting AI faster than larger firms?

Smaller, more nimble firms often benefit from shorter approval cycles for Infosec and compliance. While larger institutions are currently building complex ISO AI compliance frameworks and ‘Statements of Use’, mid-sized firms have been quicker to embed specialized AI bots into research and reporting workflows to gain a competitive edge.

- What is the human-in-the-loop model, and why is it critical?

This model ensures that AI augments human expertise rather than replacing it. In this framework, AI handles the ‘grunt work’ such as generating first drafts of commentary or complex charts, while a human expert remains at the centre to audit the output, manage potential hallucinations, and ensure the final report meets rigorous accuracy standards.

- How important is data quality for a successful AI strategy in finance?

Data is the bedrock of AI. The old adage ‘garbage in, garbage out’ is especially true in finance; without a clean, centralised data warehouse, AI tools cannot provide reliable insights. Most firms are now prioritising data infrastructure and cleansing as a prerequisite to scaling their AI initiatives.
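To make the idea of an automated health check a little more concrete, here is a minimal sketch of flagging an outlier in a series of reported figures. It is an illustration only: it uses a simple statistical rule (the modified z-score, based on the median absolute deviation) rather than an AI model, and it is not a description of any Kurtosys feature. The function name `flag_outliers`, the `threshold` value and the sample returns are all hypothetical.

```python
from statistics import median


def flag_outliers(values, threshold=3.5):
    """Return the indices of values whose modified z-score exceeds the threshold.

    The modified z-score is 0.6745 * |x - median| / MAD, where MAD is the
    median absolute deviation. A cut-off of 3.5 is a commonly used default.
    """
    med = median(values)
    abs_dev = [abs(v - med) for v in values]
    mad = median(abs_dev)
    if mad == 0:
        # No spread at all: flag anything that differs from the median.
        return [i for i, v in enumerate(values) if v != med]
    return [i for i, d in enumerate(abs_dev) if 0.6745 * d / mad > threshold]


# Hypothetical monthly returns (%) for one fund. The 12.0 mimics a
# misplaced decimal point (1.20 keyed in as 12.0).
monthly_returns = [1.1, 0.9, 1.3, 1.2, 12.0, 1.0, 0.8]

flagged = flag_outliers(monthly_returns)
for i in flagged:
    # The check only flags the value; a person decides whether it is wrong.
    print(f"Row {i}: {monthly_returns[i]} needs human review")
```

Note that the check does not correct anything. It routes the suspicious figure to a person, which is the human-in-the-loop pattern described above.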